
| Source http://groverflanagan.blogspot.com/2008_09_01_archive.html Some Rights Reserved |

BC Hydro knows all about this, of course; they've been running an ad campaign for some time, trying to encourage changes ranging from the obvious (swapping incandescent light bulbs for compact fluorescents) to the frankly unlikely (unplugging your cell phone charger when you're not using it). There is a buzzword for the savings that campaigns like this hope to realize: negawatts. The cheapest kind of power to generate is power that the utility is already generating. More and more electronic devices are entering households, and something has to power them. If Hydro's choice is between promoting energy-saving consumer changes and building a new hydro plant, an ad campaign is cheaper -- though of course that presumes that it's effective.

Let's look at two quick examples in some detail: the cell phone charger and the compact-fluorescent light bulb. You can measure the energy consumption for these or any other device using an ammeter, which you can get from Main Electronics or elsewhere for less than $20 in the form of a very handy multimeter. (Rumor has it that you can borrow one from an organization locally as part of an energy smart self-assessment kit, but I can't find details at the moment.)

The cell phone charger's job is to convert 120VAC household power to something in the neighbourhood of 5VDC that a cell phone can stomach. And frankly I have to wonder why BC Hydro has set its sights on these so prominently -- they use an incredibly small amount of power when they're not active. See this video, for example -- typically < 0.1 watts (although those are 220V appliances, the efficiency will be similar). If you're not familiar with watts (W), consider a regular 100W incandescent light bulb as your measuring stick. You'd have to unplug more than 1000 idle cell phone chargers to match turning off a single one of these light bulbs.

Even so, with over 34 million people in Canada, and with more than half that number owning a cell phone, we're talking about something in the range of a megawatt (MW), or one million watts, spent on idle cell phone chargers in this country. I'd make a back-of-the-envelope estimate from the numbers here that we're dedicating roughly one Canadian wind turbine to these. OK, it's not much, but keep in mind that this is probably the smallest wasteful device in a household, and regardless, it's still wasted power -- remember that this is just the amount measured from idling chargers, not active ones.
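For anyone who wants to check that estimate, here's the arithmetic as a minimal Python sketch. The inputs are the rough figures quoted above (34 million people, half of them owning a phone, 0.1 W per idle charger). The one-charger-per-phone assumption and the function name are made up purely for illustration.

```python
def idle_charger_load_mw(population, phone_ownership, idle_watts_per_charger):
    """Estimate national idle-charger load in megawatts.

    Assumes one always-plugged-in charger per phone owner, which is an
    illustrative simplification rather than a measured figure.
    """
    chargers = population * phone_ownership
    return chargers * idle_watts_per_charger / 1_000_000  # W -> MW


canada_mw = idle_charger_load_mw(34_000_000, 0.5, 0.1)
print(f"Idle chargers: about {canada_mw:.1f} MW")
```

With those inputs it comes out to a little under 2 MW, which is what "something in the range of a megawatt" is getting at.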

Let's take a look at the second example -- light bulbs. And this tends to be an excellent example of the complexity of power policy; you might think it's an easy decision, but even in this simple case, it's sometimes not.

A typical compact fluorescent (CFL) light bulb uses approximately a third of the power of a "similarly bright" incandescent (speaking about human eyes, of course, because some of the power savings arise because CFLs are more selective in the kind of light they emit). So at the highest level, going from 100W incandescent to a comparable CFL means an easy savings of around 70W -- or unplugging somewhere over 700 idle cell phone chargers. In the USA in 2001, lighting accounted for 8.8% of national household electrical consumption. Shifting that incandescent lighting to CFLs makes a very measurable dent on overall power usage.

That seems like an easy, effective savings -- and it generally is. However, the two technologies can't always be compared so trivially. It's often better to use an incandescent bulb in a closet or bathroom, for example, because CFLs don't take well to short use -- it decreases lifespan and doesn't give them a chance to run at their most efficient.


| Credit: Anton http://en.wikipedia.org/wiki/File:Elektronstarterp.jpg Some Rights Reserved |

CFLs are complex, too -- like traditional fluorescent lighting, they require a ballast to drive the light bulbs, and in this case, it's a miniature electronic ballast built into a circuit board in the base of each and every CFL light bulb. Incandescent bulbs are evacuated, meaning that inside the bulb is a vacuum, but CFLs are filled instead with mercury vapour. These two issues make CFLs both more expensive to manufacture and trickier to dispose of. In terms of energy savings, this is called "embodied energy" -- the amount of energy that the device costs to make and destroy.

For a CFL, one estimate puts the cost at 1.7kWh -- almost 8 times the cost of an incandescent bulb. The break-even point, i.e. the amount of time at which the total production and operational costs for an incandescent equal those for a CFL, is approximately 50 hours. After that point, the CFL is cheaper on energy. (See the above link -- really. It's got lots of great information.)
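The break-even idea can be written down in a few lines. This is a rough sketch, not the method behind the estimate quoted above: the 1.7kWh figure and the "almost 8 times" ratio come from the text, but the bulb wattages are placeholders picked for illustration, and the answer moves a lot depending on which bulbs you compare.

```python
def break_even_hours(cfl_embodied_kwh, inc_embodied_kwh, inc_watts, cfl_watts):
    """Hours of use after which a CFL's total energy cost drops below an incandescent's."""
    extra_embodied_wh = (cfl_embodied_kwh - inc_embodied_kwh) * 1000  # kWh -> Wh
    saving_per_hour_wh = inc_watts - cfl_watts  # Wh saved for each hour the bulb is lit
    if saving_per_hour_wh <= 0:
        raise ValueError("the CFL must draw less power than the incandescent")
    return extra_embodied_wh / saving_per_hour_wh


cfl_kwh = 1.7          # embodied energy estimate quoted above
inc_kwh = cfl_kwh / 8  # "almost 8 times" less; treated as exactly 8 here
# Hypothetical bulb pairs (incandescent W, CFL W), chosen only for illustration.
for inc_w, cfl_w in [(100, 30), (60, 15), (40, 11)]:
    hours = break_even_hours(cfl_kwh, inc_kwh, inc_w, cfl_w)
    print(f"{inc_w} W vs {cfl_w} W: break-even after about {hours:.0f} hours")
```

With these placeholder wattages the break-even lands somewhere between roughly 20 and 50 hours, the same order of magnitude as the estimate above. The sketch ignores the difference in bulb lifespan, which would only tilt things further toward the CFL.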

One interesting characteristic of fluorescent lighting in general is that it takes more energy to fire it up than it does to keep it running. It also incurs more wear and tear. A lot of complicated devices behave this way -- cars, computers, etc.

Any of these technical minutiae can make for unexpected human-level implications. I was in Ghana a few years ago, where the power grid is badly overburdened. Rotating black-outs made it hard to plan the computer-based workshop I was trying to run -- but even in places where the power was running, the power grid would sag in voltage far below the level it was supposed to supply (these episodes are called "brown-outs").

People had long since discovered that there was enough power to run a fluorescent light, but not enough to start one up -- so to ensure that they had light into the evening, when brown-outs typically occurred, they would run their fluorescent lights all day. Net result: people had lighting at night, but the lights ran wastefully through the daylight hours.

So I've rambled through BC Hydro's ad agency and the endless subject of compact fluorescent light bulbs, and even wandered through sub-Saharan Africa -- and this is supposed to be a nerd blog. Let me return to the subject of computers.

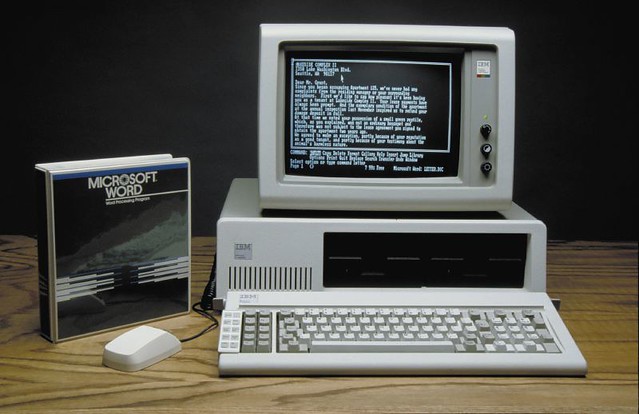

In the '80s, a household computer looked like this:


| Credit: dottavi http://www.flickr.com/photos/hexholden/251138522/ Some Rights Reserved |

Today, and leaving aside the growing popularity of laptops, a home computer might look like this:


| Credit: DandyDanny, http://www.flickr.com/photos/dandydanny/498986555/ Some Rights Reserved |

In the newer setup, neither the tower nor the screen is ever really turned off. (And to be clear: my interest here is in the power used by the device when it's not being used, i.e. purely wasted power. A CRT monitor as pictured in the old computer uses a lot more power than an LCD as pictured in the newer computer. But that's not what I'll be talking about here.)

The tower's power supply and motherboard are always semi-awake so that they can provide power to peripherals that might want it, and so that they can react to those peripherals' demands for further action and attention. For example, the computer might be configured to start up when there's activity on the network, or at a certain time of day, or when the space bar is pressed on the keyboard. Practically speaking, however, I've never seen any of these options used.

The monitor is never turned off because using a real power switch would provide a lesser esthetic experience. Translation: it wouldn't be as pretty, so we'd rather waste power.

Both the newer tower and the newer monitor have low-power modes and probably meet numerous efficiency standards, but it's impossible to match the efficiency of a device that's fully turned off.

The battery-powered keyboard and mouse are themselves fairly efficient devices, but they do rely on battery power, which has an extremely high embodied energy and generally relies upon toxic chemicals, and requires a charge/discharge pattern of use which is itself fairly wasteful. Is it really that important to forgo a wire to each when the environmental cost is so high?

Furthermore, in a modern computer setup there are lots of devices that aren't in the picture above but are probably lurking behind the desk: a cable or ADSL modem, a wireless router, and a fistful of wall warts for all the small peripherals people tend to have, like external hard drives and USB hubs.

How does it all total up? Here's a good general table for estimating, with measurements both for active and inactive devices. I'd guess that the above system, powered off, will use about 10W assuming there's a household Internet connection of some kind and not costing in the battery-powered equipment. Maybe that doesn't seem like much, but remember that this is with the device turned off.

Again, this is totally back-of-the-envelope stuff and makes a number of assumptions, but for the sake of discussion, in December 2004, 71% of Canadian households had computers. According to the 2006 census, there were 12.4 million households. Computer ownership and number of households have both increased since 2004 and 2006 (respectively), and we're assuming one desktop or tower computer per household within that 71% ownership statistic, but this gives us a comfortable lower bound of roughly 88 megawatts (about 8.8 million households at 10W each) wasted by Canadian computers that are turned off.
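Multiplying those figures out as a quick sketch (the 10W per system is the guess from above, one setup per computer-owning household is an assumption, and the function name is made up for illustration):

```python
def standby_load_mw(households, ownership_share, standby_watts):
    """Estimate total standby draw in megawatts.

    Assumes exactly one computer setup per computer-owning household.
    """
    systems = households * ownership_share
    return systems * standby_watts / 1_000_000  # W -> MW


systems = 12_400_000 * 0.71
load_mw = standby_load_mw(12_400_000, 0.71, 10)
print(f"Computer-owning households: about {systems / 1e6:.1f} million")
print(f"Standby load: about {load_mw:.0f} MW")
```

That works out to about 8.8 million households drawing 10W each, or on the order of 88 MW in total.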

That's a lot of wasted energy -- and not wasted by bad consumer habits (in fact, this level requires good consumer habits) but by wasteful design on the manufacturers' part.

Let's look at some proper calculations done by people who don't make rash assumptions at quite such a high rate of knots. There's a good summary here, including the following:

- Estimates for the total vampire power (i.e. wasted by inactive devices) consumption of the USA are around 12% of household consumption. (Remember, the entire lighting bill of the USA came to around 8.8% according to the previous discussion.) That's a phenomenal amount.

- Plug-in load is the fastest growing category of residential and commercial energy use in North America.

There are several makes of smart power strips that automatically switch several of the plugs on and off based on how much power a "control" plug draws. If you plug a computer into the control plug, the power bar will switch the others on and off according to whether the computer is on. But generally I'm cynical about adding more technology as a solution to problems caused by too much technology -- you can also pick up a cheap vintage power strip for a dollar and just use the $@*& switch.

I think the solution is for stricter standards to induce manufacturers to do the job properly. Devices have generally gotten a lot more efficient in operational terms -- mobile computing has driven a lot of this, in its quest for smaller sizes, longer battery life, and less waste heat. The technology is good enough, but lazy engineering in things like plug-in peripherals needs to be targeted.

I'd also suggest that electricity needs to get more expensive. There are tons of improvements in both behavior and choice that all of us can tackle -- and I'm definitely part of that problem. I tend to leave my laptop running far more than necessary. Fortunately, like many of us, I'm cheap and if I can see savings I'll change my evil ways.

This leads me to a final and often-forgotten quirk about the quest for energy efficiency. Where does all the wasted energy go? Thanks to the law of conservation of energy, it can't just disappear. Typically it escapes as heat. Last winter my apartment was heated by electric baseboard -- an awful, wasteful way to heat an apartment, but something that's beyond the control of a renter like myself. In that situation, I can swap light bulbs and pull jacks until I'm blue in the face -- but every watt that I save that way means one less watt of heat generated, which means my electric baseboards will have to work one watt harder. It's a zero-sum game. My solution: plastic lining on the windows, an electric blanket, and a vigorous exercise regimen of fist-shaking at my cheap landlord.

(Update 2011/06/28: CBC's "On The Coast" had a segment on vampire power today. Check out the podcast. -AS)
